Dogfooding Is the New Code Review

AI agents can ship code faster than humans can review it. Correct code still needs human time to become good software.

Simon Willison, who has been working on Agentic Engineering Patterns, has a nice post on the blurred lines between vibe coding and agentic engineering.

The line that stuck with me:

> I realized what I value more than the quality of the tests and documentation is that I want somebody to have used the thing.

This matches my experience as well.

AI agents can now ship code faster than any individual human can review it. The bottleneck is still humans, who have to use the thing, notice what feels wrong, and decide what good looks like.

AI has largely eaten the middle of the implementation sandwich. You spend most of your time at the beginning and the end: thinking through what should exist, then checking whether the thing is actually good. The agent handles the implementation in the middle.

That changes where I want to spend my time. It’s worth spending more time upfront writing and designing the solution, because wrong thinking there leads to outsized wasted work later. Then you translate that into a spec or plan, hand it off to the agent, and use harness engineering to force validation: tests, end-to-end tests, external review agents, and whatever else proves the code is correct.
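To make "force validation" a bit more concrete, here is a minimal sketch of what such a harness could look like. The names `Check`, `run_harness`, and `ready_for_dogfooding` are hypothetical, made up for illustration and not taken from any real tool; the idea is just that every gate runs, and the agent's work only moves on when all of them are green.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Check:
    """One validation gate. Both fields are illustrative assumptions."""
    name: str                 # e.g. "unit tests", "e2e tests", "review agent"
    run: Callable[[], bool]   # returns True when the gate passes


def run_harness(checks: List[Check]) -> Dict[str, bool]:
    """Run every gate and record the result, so one failure does not hide others."""
    results: Dict[str, bool] = {}
    for check in checks:
        try:
            results[check.name] = bool(check.run())
        except Exception:
            # A gate that crashes is treated as a gate that failed.
            results[check.name] = False
    return results


def ready_for_dogfooding(results: Dict[str, bool]) -> bool:
    """All gates green means 'correct enough to start using', not 'good'."""
    return bool(results) and all(results.values())
```

In a real setup each `run` would probably shell out to a test runner or call a review agent; plain callables keep the sketch self-contained. Note what the final function returns: a yes or no on correctness, with nothing to say about how the software feels to use.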

Harness engineering can prove the code is correct, not that the thing is good. That gets you 80% of the way there. The last 20% is on you.

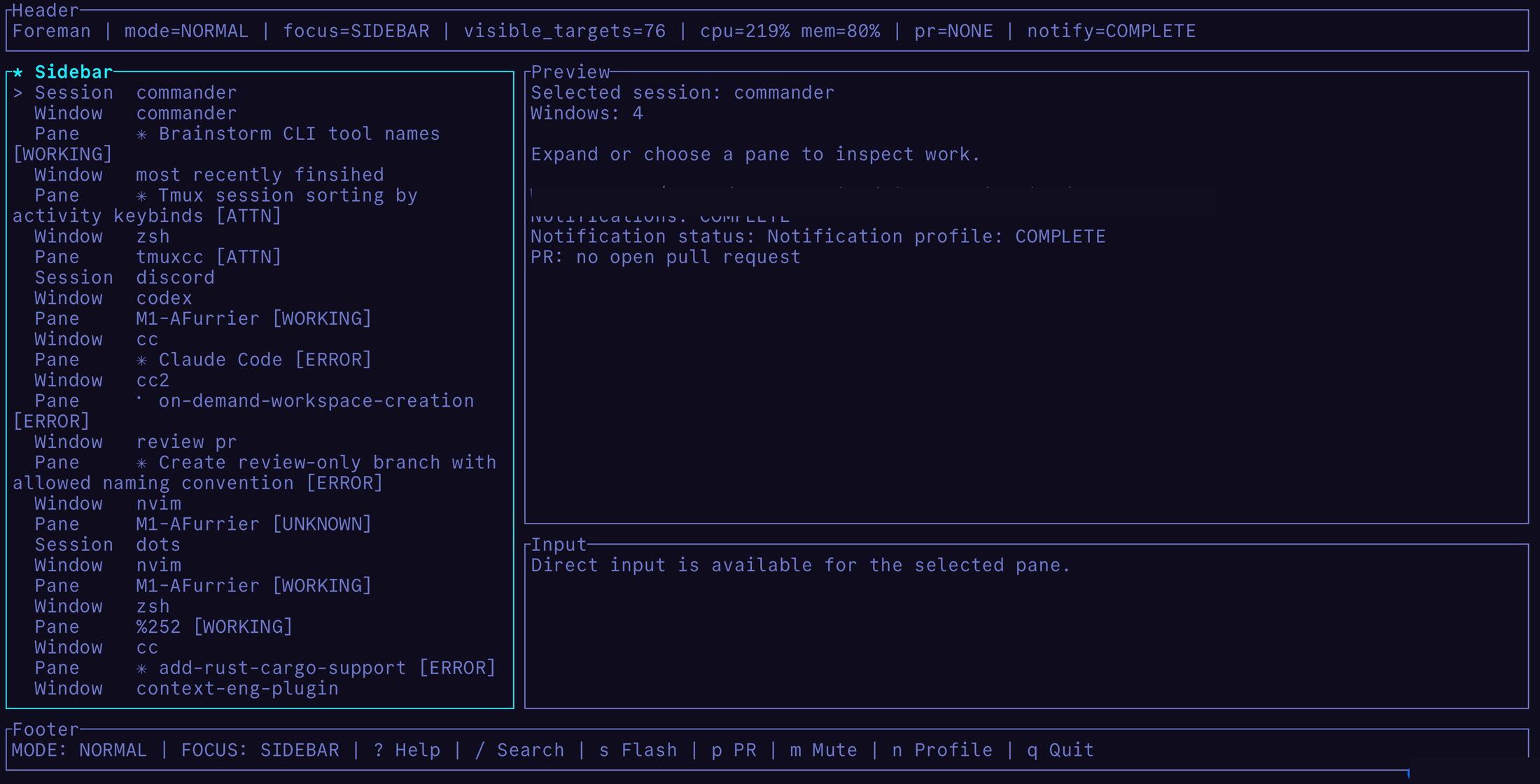

A personal example: I built a tmux-based agent control plane for myself. The design described a good UX, and the agent did the obvious implementation work: sessions, panes, statuses, previews, input. Even with screenshot validation, the first version was functionally correct and awful to use. It technically did what I asked. I only found the bad parts because this is a tool I use every day, so the rough edges kept hitting me until I fixed them.

Functionally correct code, awful software to experience.

Dogfooding is simple, but not easy. It takes time. You have to actually use the software, ideally every day, so the sharp edges, bad product decisions, and gaps in your tests have a chance to show up. Then you can pick them off one by one.

“I don’t like this, we should do it this way” is a thought you have while using the thing, not while reviewing thousands of lines of AI-generated code.

Agent time is correlated with code correctness. Human time is correlated with polish and trustworthiness.

Code correctness is largely solved. Good software is not.